Performance


Revision as of 06:53, 8 February 2015

Status

OpenZFS on OS X is currently under active development, with priority given to stability and integration enhancements before performance.

Performance Tuning

Memory Utilization

ZFS uses memory caching to improve performance. Filesystem metadata, data, and the deduplication tables (DDT) are stored in the ARC (Adaptive Replacement Cache). This allows frequently accessed data to be read many times faster than is possible from hard disk media. The performance you get from ZFS is directly linked to the amount of memory allocated to the ARC.

O3X uses Wired (non-pageable) memory for the ARC. Activity Monitor and top can display the total amount of Wired memory allocated. Because O3X is not the only user of wired memory, you can check how much memory (in bytes) O3X itself is using with the command:

- $ sysctl kstat.spl.misc.spl_misc.os_mem_alloc

Note that this value will very likely be greater than the configured maximum ARC size, because of overheads in the allocator itself. For example, on a 32GB iMac with the ARC limited to 8GB:

- $ sysctl kstat | grep arc_max

- kstat.zfs.darwin.tunable.zfs_arc_max: 8589934592

- $ sysctl kstat.spl.misc.spl_misc.os_mem_alloc

- kstat.spl.misc.spl_misc.os_mem_alloc: 11866734592
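The example's arithmetic can be checked directly. 8589934592 bytes is exactly 8 GiB (8 × 2^30), and os_mem_alloc exceeds it by 3276800000 bytes (about 3.05 GiB). The SPL therefore holds roughly 1.38 times the ARC cap. The shell sketch below reproduces this comparison. Its function names are illustrative and not part of O3X. It assumes the two sysctl names shown above (O3X 1.3.1 RC or later), and you can pass byte counts as arguments instead of querying sysctl.

```shell
#!/usr/bin/env bash
# Sketch: compare O3X's total SPL allocation with the configured ARC cap.
# Assumption: on OS X with O3X 1.3.1 RC or later loaded, the two kstat
# sysctls shown above exist; passing byte counts skips the sysctl calls.

GIB=$((1 << 30))    # 1 GiB = 1073741824 bytes

# Format a byte count as GiB with two decimals.
bytes_to_gib() {
  awk -v b="$1" -v g="$GIB" 'BEGIN { printf "%.2f\n", b / g }'
}

# Usage: arc_overhead_report [arc_max_bytes os_mem_alloc_bytes]
arc_overhead_report() {
  local arc_max=${1:-} alloc=${2:-}
  if [ -z "$arc_max" ] || [ -z "$alloc" ]; then
    # -n prints only the value, without the "name:" prefix
    arc_max=$(sysctl -n kstat.zfs.darwin.tunable.zfs_arc_max) || return 1
    alloc=$(sysctl -n kstat.spl.misc.spl_misc.os_mem_alloc) || return 1
  fi
  echo "zfs_arc_max:  $arc_max bytes ($(bytes_to_gib "$arc_max") GiB)"
  echo "os_mem_alloc: $alloc bytes ($(bytes_to_gib "$alloc") GiB)"
  echo "excess:       $(( alloc - arc_max )) bytes ($(bytes_to_gib $(( alloc - arc_max ))) GiB)"
  # How many times larger the total allocation is than the ARC cap
  awk -v a="$alloc" -v m="$arc_max" 'BEGIN { printf "ratio:        %.2f\n", a / m }'
}
```

On a Mac with O3X loaded, run `arc_overhead_report` with no arguments. To reproduce the figures above, use `arc_overhead_report 8589934592 11866734592`.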

Over the last several releases, O3X has evolved the memory allocator in the SPL (Solaris Porting Layer) to closely match the OpenSolaris allocator. This was driven by the OS X kernel allocator being unable to meet the demanding requirements of a full ZFS implementation. By default, O3X 1.3.1 will allow the ARC to occupy most of your computer's physical memory. In parallel, it has a mechanism that detects when the computer is under memory pressure and releases memory back to the OS for other purposes. This mechanism has been extensively tested, but may not be suitable for everyone.

You can temporarily limit the size of the ARC with the command for your O3X version:

- $ sudo sysctl -w kstat.zfs.darwin.tunable.zfs_arc_max=<size of arc in bytes> (O3X 1.3.1 RC and beyond)

- $ sudo sysctl -w zfs.arc_max=<arc size in bytes> (O3X 1.3.0)
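Both commands take a size in bytes, so a small helper can help avoid arithmetic slips. The sketch below converts whole GiB to bytes and prints the command for a given version string instead of running it. Its function names are hypothetical. It assumes, based on the two lines above, that every version other than "1.3.0" uses the kstat tunable name.

```shell
#!/usr/bin/env bash
# Sketch: build (but do not run) the command that temporarily caps the ARC.
# Assumption: only "1.3.0" uses the old zfs.arc_max name; 1.3.1 RC and
# later use the kstat tunable, as listed above.

# Convert a whole number of GiB to bytes (1 GiB = 2^30 bytes).
gib_to_bytes() {
  echo $(( $1 * (1 << 30) ))
}

# Usage: arc_max_cmd <o3x_version> <arc_size_gib>
arc_max_cmd() {
  local version=$1 bytes
  bytes=$(gib_to_bytes "$2")
  if [ "$version" = "1.3.0" ]; then
    echo "sudo sysctl -w zfs.arc_max=$bytes"
  else
    echo "sudo sysctl -w kstat.zfs.darwin.tunable.zfs_arc_max=$bytes"
  fi
}
```

For example, `arc_max_cmd 1.3.1 8` prints the kstat form with 8589934592 bytes. Review the printed line before running it.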

The final release of O3X 1.3.1 (but not 1.3.1-RC5) will include a persistent configuration mechanism. Adding the following line to /etc/zfs/zsysctl.conf will cause the setting to be applied automatically whenever the O3X kexts are loaded.

- kstat.zfs.darwin.tunable.zfs_arc_max=<size in bytes of arc>
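The following is a sketch of making that change from a script. It assumes zsysctl.conf holds one name=value setting per line, as in the line above. It replaces any existing zfs_arc_max entry rather than appending a duplicate, and leaves other settings alone. The function name and the CONF override (handy for trying it on a scratch file) are hypothetical. Writing to /etc/zfs normally requires root.

```shell
#!/usr/bin/env bash
# Sketch: persist an ARC limit in zsysctl.conf.
# Assumptions: one name=value setting per line; CONF may be overridden.

CONF=${CONF:-/etc/zfs/zsysctl.conf}
KEY=kstat.zfs.darwin.tunable.zfs_arc_max

# Usage: persist_arc_max <arc_size_bytes>
persist_arc_max() {
  local bytes=$1 tmp
  mkdir -p "$(dirname "$CONF")" && touch "$CONF" || return 1
  tmp=$(mktemp) || return 1
  # Keep every line that does not start with "KEY=" (exact prefix match),
  # then append the new setting.
  awk -v k="${KEY}=" 'index($0, k) != 1' "$CONF" > "$tmp"
  echo "${KEY}=${bytes}" >> "$tmp"
  # Overwrite in place so the file keeps its owner and permissions.
  cat "$tmp" > "$CONF"
  rm -f "$tmp"
}
```

For example, from a root shell: `persist_arc_max 8589934592`.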

To monitor the size and performance of the ARC, use the provided arcstat.pl script. The argument is the sampling interval in seconds:

- $ arcstat.pl 1

If you need to reduce the amount of memory used by O3X right now, there are two steps. First, reduce the maximum ARC size to a value of your choosing:

- $ sudo sysctl -w kstat.zfs.darwin.tunable.zfs_arc_max=<size of arc in bytes>

Then force the SPL memory allocator to release memory back to the OS:

- $ sudo sysctl -w kstat.spl.misc.spl_misc.simulate_pressure=<amount of ram to release in bytes>

The second command forces the SPL memory allocator to be reaped, releasing memory from its internal caches to the OS. This is a fairly coarse mechanism and may have to be repeated several times to achieve the desired effect. You can use Activity Monitor, top, and/or arcstat.pl to determine whether you have released enough memory. Note that this will likely put further pressure on the ARC, evicting a commensurate amount of cached data from main memory.
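The two steps can be combined into a small dry-run helper. You supply the current os_mem_alloc value and the new ARC ceiling, and it prints both commands. Asking the allocator to release the full difference is a heuristic of this sketch, not an O3X rule. Allocator overhead keeps the total above the ARC size, so you may still need to repeat the second command. The function name is hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: print (not run) the two commands that shrink O3X memory use now.
# Assumption: releasing (current os_mem_alloc - new ARC ceiling) bytes is
# a heuristic of this sketch, not an O3X rule.

# Usage: reduce_memory_cmds <current_os_mem_alloc_bytes> <new_arc_max_bytes>
reduce_memory_cmds() {
  local current=$1 arc_max=$2
  if [ "$current" -le "$arc_max" ]; then
    echo "os_mem_alloc is already at or below the requested ARC ceiling" >&2
    return 1
  fi
  # Step 1: lower the ARC ceiling so the ARC cannot refill beyond it.
  echo "sudo sysctl -w kstat.zfs.darwin.tunable.zfs_arc_max=$arc_max"
  # Step 2: ask the SPL allocator to reap roughly the difference.
  echo "sudo sysctl -w kstat.spl.misc.spl_misc.simulate_pressure=$(( current - arc_max ))"
}
```

For example, `reduce_memory_cmds "$(sysctl -n kstat.spl.misc.spl_misc.os_mem_alloc)" 4294967296` prints the commands for a 4 GiB ceiling.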

We keep our ARC implementation aligned with the Illumos code. There have recently been some performance tuning changes to the Illumos ARC; these will flow through to O3X in a future release.

Current Benchmarks

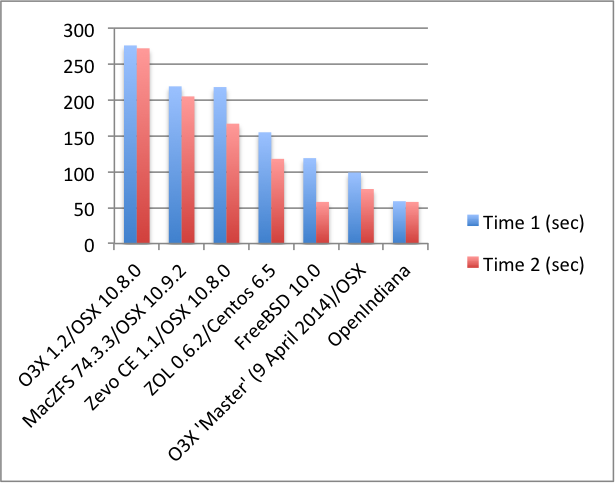

To establish a baseline for the current performance of OpenZFS on OS X, measurements have been made using the iozone [1] benchmarking tool. Each ZFS implementation is run inside a VMware 6.0.2 VM on a 2011 iMac. Each VM is provisioned with 8GB of RAM, an OS boot drive, and a second 5GB HDD containing the ZFS pool. The HDDs are standard VMware .vmdk virtual disk files.

The test zpool was created using the following command:

- zpool create -o ashift=12 -f tank <disk device name>
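The ashift property is the base-2 logarithm of the sector size ZFS uses for the vdev. So ashift=12 selects 4096-byte (4K) sectors, and ashift=9 would select 512-byte sectors. The following one-line conversion makes this concrete (the function name is illustrative):

```shell
#!/usr/bin/env bash
# Convert a ZFS ashift value to the sector size in bytes: 2^ashift.
ashift_to_bytes() {
  echo $(( 1 << $1 ))
}
```

For example, `ashift_to_bytes 12` prints 4096.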

The benchmark consists of the following steps:

- Start the VM.

- Import the tank pool.

- Execute -> mkdir /tank/tmp && cd /tank/tmp

- Execute -> time iozone -a

- Record time 1

- Execute -> time iozone -a

- Record time 2

- Terminate the VM and VMware before moving to the next ZFS implementation/OS combination.
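The mkdir, iozone, and timing steps can be scripted so that both timings are recorded the same way. This is a minimal sketch. It assumes the pool is already imported at /tank and iozone is on the PATH. The DIR override, the function names, and capturing the time through bash's TIMEFORMAT are choices of this sketch, not part of the original procedure. Starting, importing, and terminating the VM remain manual.

```shell
#!/usr/bin/env bash
# Sketch: run the benchmark command twice in DIR and report both times.
# Assumptions: pool imported at /tank; DIR and the command are overridable.

# Print the elapsed real time (seconds) of a command via bash's time
# keyword; the command's own output is discarded.
timed_run() {
  local TIMEFORMAT=%R
  { time "$@" > /dev/null 2>&1 ; } 2>&1
}

# Usage: run_benchmark [command args...]   (default: iozone -a)
# The body runs in a subshell so the cd does not affect the caller.
run_benchmark() (
  dir=${DIR:-/tank/tmp}
  [ $# -gt 0 ] || set -- iozone -a
  mkdir -p "$dir" && cd "$dir" || exit 1
  t1=$(timed_run "$@")
  t2=$(timed_run "$@")
  echo "time 1: ${t1}s"
  echo "time 2: ${t2}s"
)
```

Run `run_benchmark` for the default `iozone -a`. Passing another command, such as `run_benchmark true`, gives a quick dry run.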

The results are as follows: